Le-Picasso

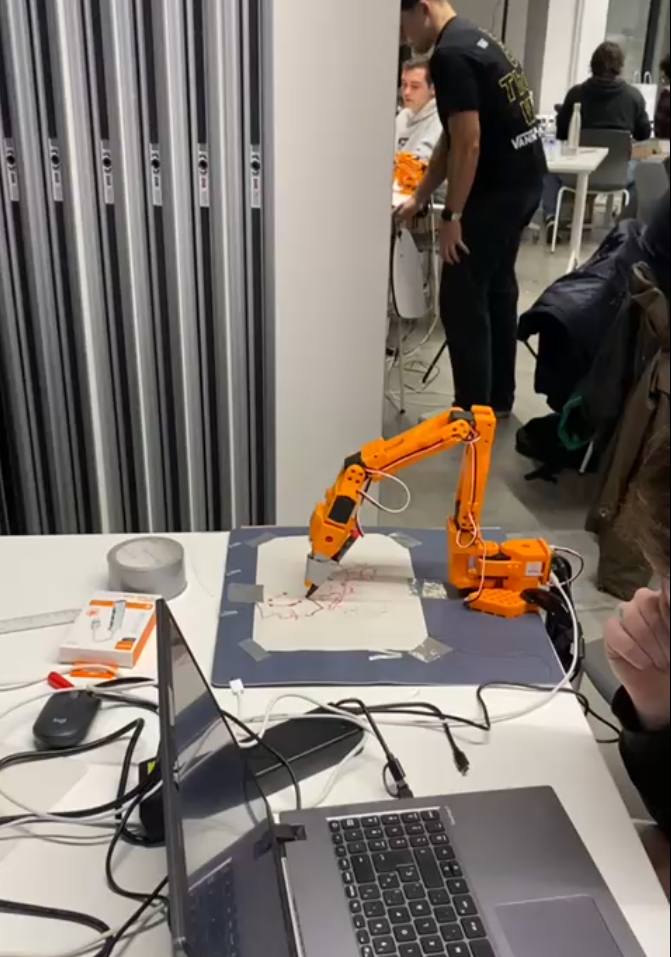

Le-Picasso is an end-to-end project that allows users to control a robotic arm (SO-100) and make it draw objects using just their voice. This project was developed during the LeRobot Bilbao Hackathon with my colleagues Lingfeng, Dani, Andrei and Martín.

Highlight: End-to-end pipeline combining real-time NLP, robotics and a web interface.

How it works

The architecture connects a user-friendly web frontend, AI-based audio processing and execution on physical hardware:

- Web Application: Users interact with a graphical web interface where they can record and send their voice commands.

- AI Audio Processing: An AI model processes the incoming audio in real time, turning the spoken command into text for the next step.

- Instruction Extraction (NLP): We used Named Entity Recognition (NER) to parse the natural language input and extract the specific drawing instructions (e.g., understanding that "draw a house" means the target object is a "house").

- Robotic Execution: The parsed commands are sent to the robotic arm via the LeRobot framework, which then physically executes the task by drawing the requested object on paper.
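The instruction-extraction and drawing steps can be illustrated with a minimal Python sketch. It assumes the audio has already been transcribed to text, and it swaps the project's NER model for simple keyword matching against a small, hypothetical library of drawable objects (`SHAPES`). `extract_instruction`, `plan_strokes`, `DrawInstruction`, the trigger verbs and the paper size are illustrative names and values, not the project's actual code. The hand-off to the SO-100 through LeRobot (inverse kinematics, motor commands) is not shown.

```python
import re
from dataclasses import dataclass
from typing import List, Optional, Tuple

Point = Tuple[float, float]
Stroke = List[Point]

# Hypothetical template library: each known object is a list of pen strokes
# (polylines) in normalised paper coordinates, (0, 0) at one corner and (1, 1)
# at the opposite one. The object names double as the "entity" vocabulary.
SHAPES = {
    "house": [
        [(0.2, 0.2), (0.8, 0.2), (0.8, 0.6), (0.2, 0.6), (0.2, 0.2)],  # walls
        [(0.2, 0.6), (0.5, 0.9), (0.8, 0.6)],                          # roof
    ],
    "tree": [
        [(0.45, 0.1), (0.45, 0.4), (0.55, 0.4), (0.55, 0.1)],          # trunk
        [(0.3, 0.4), (0.5, 0.9), (0.7, 0.4), (0.3, 0.4)],              # canopy
    ],
}

# Assumed trigger words that mark a drawing request.
DRAW_VERBS = {"draw", "sketch", "paint"}
_WORD = re.compile(r"[a-z]+")


@dataclass
class DrawInstruction:
    action: str
    target: str


def extract_instruction(transcript: str) -> Optional[DrawInstruction]:
    """Return the first known object mentioned after a drawing verb, or None.

    Keyword matching stands in for the NER model used in the project.
    """
    words = _WORD.findall(transcript.lower())
    for i, word in enumerate(words):
        if word in DRAW_VERBS:
            for candidate in words[i + 1:]:
                if candidate in SHAPES:
                    return DrawInstruction(action="draw", target=candidate)
    return None


def plan_strokes(instruction: DrawInstruction,
                 paper_w_mm: float = 200.0,
                 paper_h_mm: float = 150.0) -> List[Stroke]:
    """Scale the template strokes for the target object to millimetres."""
    return [
        [(x * paper_w_mm, y * paper_h_mm) for x, y in stroke]
        for stroke in SHAPES[instruction.target]
    ]


if __name__ == "__main__":
    instr = extract_instruction("Could you draw a house for me?")
    print(instr)  # DrawInstruction(action='draw', target='house')
    if instr is not None:
        for stroke in plan_strokes(instr):
            # In the real system each stroke would become arm motions via
            # LeRobot; this sketch only prints the waypoints.
            print(stroke)
```

Keeping each object as pen strokes in normalised coordinates makes the drawing independent of paper size: scaling to millimetres happens only at the end, just before the waypoints would be converted into arm movements.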